Scrambled Keys

F5.

F5.

F5 F5 F5.

WTF?

Why isn’t this running?

F5 F5.

Grrr…

Run->Debug.

(wait)

(wait)

F10.

F10.

Why is that making the File menu highlight?

F10.

…

F11.

WTF?! It restarted?

Tip of the day: If you install the C/C++ Development Tools (CDT) in Eclipse, it’ll come with a set of Visual Studio key bindings.

December 7, 2010

Pizza Pizza

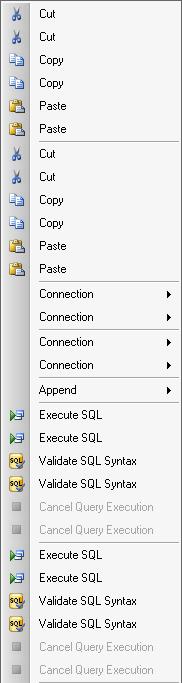

Visual Studio never fails to come up with amusing ways to go horribly wrong.

And yet somehow, “Append” is immune.

May 18, 2010